15 Kinds of Bogus Citations

Classic ways to self-servingly screw up references to science, like “the sneaky reach” or “the uncheckable”

![Photo of the inside of someone’s wrist, with the text “[citation needed]” — as often seen on Wikipedia — tattooed just below the heel of the hand.](/assets/images/citation-needed-tattoo--sq-560x560-35k.jpg)

Now that’s dedication to scholarship! The statement “[citation needed]” is a Wikipedia convention, often seen following assertions of uncertain accuracy. It’s a signal to editors to find a citation if they possibly can, and to visitors that a statement might not be true.

Just because there are footnotes does not make it true.1 Citations to “scientific evidence” are not inherently trustworthy. In fact, they are often misleading and scammy. Referencing theatre is all about creating the appearance of scholarship, credibility, and substantiation with many types of bogus citations that can’t actually deliver those things.

For example, I got an email from a reader keen to make a point. He included a reference to support it, which is unusual and welcome — most people are not that diligent. Unfortunately for his credibility, I actually checked that reference, and I quickly realized that the paper did not actually support his point. Not even close. It wasn’t ambiguous.2

Surely that’s just an amateurish mistake? Well, yes — but that counts, and even professionals and academics are surprisingly careless with their citations, or even reckless. The phenomenon of the bogus citation is a tradition in genuine science and earnest science journalism, as well as being standard in pseudoscientific marketing. The milder forms of bogus citations are epidemic, while more serious ones crop up cringe-inducingly often even in the most unlikely places. Many working scientists “pad” their papers and books with barely relevant citations. They probably pulled the same crap with their term papers as undergrads!

Worst of all, there is now an actual industry — a huge industry — of both junky and actually fraudulent journals34 (much more about this below) providing an endless supply of useless citations. It is now easy to find “support” for “promising” evidence of anything at all — and no one will know the difference unless they are research-literate and diligent enough to check.

And that was all true before artificial intelligence made all of this so much easier. AI tools not only greatly facilitate all the old human-motivated scholarly corner-cutting and truth-bending, but add their own supercharged talent for inventing citations. It’s impossible to overstate how much of a problem this is after just a couple years, and it’s growing so fast I don’t think anyone even knows how fast it’s growing.

“A promising treatment is often in fact merely the larval stage of a disappointing one. At least a third of influential trials suggesting benefit may either ultimately be contradicted or turn out to have exaggerated effectiveness.”

Bastian, 2006, J R Soc Med

This crap gives science a black eye. But it’s not that science is bad — it’s just poorly behaved humans (and AI) making real science look bad. One study has never been able to prove anything, but now more than ever we need to see and check multiple high-quality citations for a key point before we can trust it. Nothing can actually be “known” until several credible lines of research have all converged on the same point.

[better citation needed]

15 common wrong ways (at least!) to cite

The right way to cite scientific evidence is to link to a relevant study with no glaring flaws or limitations. It has to be up for the job, and actually exist in a real and half-decent journal. But if you don’t have one of those? Use one of these …

1. The old-school fake

Just make it up! Good old fraud — and carelessness right up to the point where it might as well be fraudulent. No one actually checks these things anyway, right? Even scientists are doing it! There have been cases of extensive phantom citations to papers that never existed.

2. The AI fake

Get AI to generate your citations. No human can make things up like large language models, the engines of artificial intelligence. AI is now super-charging the phenomenon of fake citations in many contexts. For instance, Jonathan Jarry found only 5 real citations out of 65 he checked in a batch of AI-slop health videos for seniors.5 2025 is only the first year this became a problem; it will get worse.

3. The junk cite

Cite junk science in real-but-terrible journals, the original home of pseudoscience. Such evidence is technically “peer-reviewed,” but peer-reviewed badly by hacks and quacks preaching to a choir of highly biased and/or unqualified peers. These journals exist just to publish what real journals won’t touch, and alt-med has had hundreds of them for decades now. The emphasis in alt-med research is on setting the bar low enough to get over it without anyone but those nasty skeptics noticing. And it works!

4. The spam cite

Reference to junk science that is not actually peer-reviewed at all — published in a fraudulent “predatory journal” that will publish literally anything it is paid to publish. Many of these exist in a gray zone between merely low-quality and actually fraudulent, and it can be hard to tell the difference, but a lot of them are true scams. The growth of this category has been explosive since 2010.

5. The clean miss

Cite something that is topically relevant but which simply doesn’t actually support your point. It looks like a perfectly good footnote, but where’s the beef? Papers that consist of nothing but mechanism masturbation are a common example: superficially substantive, detailed speculation about how something works in the absence of any actual evidence of efficacy (or even plausibility). Fascia research, for instance, is notoriously rotten with mechanism masturbation.

6. The backfire

Citing science that actually undermines the point, instead of supporting it. This is impressively common! People don’t read the fine print. This is often a seemingly honest mistake insofar as the abstract actually does seem to be good news — but abstracts are about as reliable and nuanced as headlines, and they often spin negative results so hard that they come out sounding positive. However, people are often so careless with citations that they miss or ignore blatantly negative results, and bizarrely present them as “proof” of their point.

7. The sneaky reach

Make a bit too much of evidence that is actually good and relevant … probably without even realizing it yourself. Even good science has limits, and individual studies, or even small sets of studies, are routinely inadequate. But one to three positive studies, often cherry-picked from a body of evidence with more mixed results, are not telling the whole story. The great thing about the "sneaky" reach is that it tends to preserve plausible deniability, and only seems bogus to unusually well-informed readers.

8. The big reach

Make way too much of otherwise good evidence. This can go so far beyond the sneaky reach that it becomes a different thing, a blatant attempt to cite a study that isn't just underpowered for the job, but is actually wrong in principle for the job. An extremely common example of this type of citation is using evidence of correlations to support beliefs and assumptions about causes.

9. The curve ball

Cite science that just has little or nothing to do with the point. It’s surprising how often this happens. This is almost exactly like a fake citation in spirit — it just uses a citation that actually does exist.

10. The bluff (A.K.A. “the name drop”)

A citation selected at random, but specifically from a famous scientific journal like The New England Journal of Medicine… because no one actually checks references, do they? NASA studies are a popular source for bluff cites (more below).

11. The ego trip

Cite your own work … which in turn cites only your own work … and so on, round and round! Ironically, people often accuse me of using this one.6

12. The uncheckable

Citing a chapter in a book no one can or would ever want to actually read, because it has a title like Gaussian-Verdian Analysis of Phototropobloggospheric Keynsian Infidelivitalismness… and it’s been out of print for decades. And it’s in Russian. But it’s real!

13. The lite cite

Case studies and other tarted up anecdotal evidence are a nice way to cite lite. The most notorious example in the pain field is "boot nail guy," frequently used to support claims that pain can be psychosomatic, and it’s a real citation, to a major medical journal … but it’s just a professional anecdote.7

14. The dump cite

Cite in bulk! A reference dump is meant to overwhelm rhetorical opposition with sheer volume — too many to meaningfully evaluate or respond to. Sort of like the Gish gallop.

15. The zombie

Citing a paper that was retracted and/or definitively debunked long ago, but refuses to die, and continues to get cited as though it is still legit — and these citations certainly look that way to most unwary readers. There are many classic examples.

•

What if citations avoid all of these pitfalls? They can still be bogus!

Genuine and serious research flaws are often actually or effectively invisible. Famously, “Most Published Research Findings Are False,”8 even when there’s nothing obviously wrong with them. It’s amazing how many ways “good” studies can still be bad, how easily they can go wrong, or be made to go wrong, by well-intentioned researchers trying to prove pet theories.9 Physical medicine and pain medicine are both plagued by junky, underpowered studies with “positive” results that just aren’t credible — all they do is muddy the waters.10

So beware of citations to a smaller number of small studies… even if there’s nothing obviously wrong with them.

The rest of the article is devoted to elaborations, examples, and more complex concerns about citation quality — and there are plenty of those.

So many times I’ve been intrigued by the abstract of a scientific paper, dug into the whole thing, and COULD NOT FIND THE REST OF THE PEPPERONI.

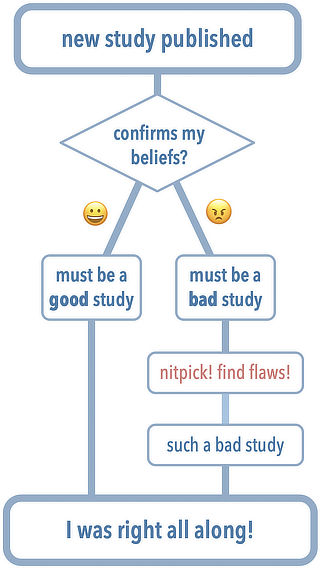

Cherry-picking and nit-picking

Citations are often “cherry picked” — that is, only the best evidence supporting a point is cited. The citations themselves may not be bogus, but the pattern of citation is, because it’s suspiciously lacking contrary evidence.

Less familiar: advanced cherry pickers also exaggerate the flaws of studies they don’t like (to justify dismissing them). Such nitpicking can easily seem like credible critical analysis, but it’s easy to find problems with even the best studies. Research is a messy, collaborative enterprise, and perfect studies are as rare as perfect movies. When we don’t like the conclusions, we are much likelier to see research flaws and blow them out of proportion. It works like this…

No one is immune to bias, and evaluating scientific evidence fairly is really tricky. At this point you might be wondering how we can ever trust any citations. But it gets even worse…

The life cycle of bogus citations

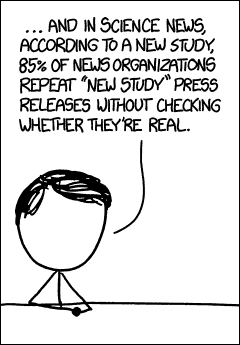

I’ll now pass the baton to geek-cartoonist Randall Munroe to explain how bogus references proliferate:

Munroe made a highly relevant comment about that comic:

I just read a pop-science book by a respected author. One chapter, and much of the thesis, was based around wildly inaccurate data which traced back to … Wikipedia. To encourage people to be on their toes, I’m not going to say what book or author.

A weird bonus bogus citation type: NASA studies

Any time you hear that something in health care is allegedly “based on a NASA study,” it’s probably at least partially bullshit. Its importance is being carelessly or deliberately inflated, often for commercial purposes, by leveraging the power of the NASA brand.

The finding often does not actually come from NASA at all, so it’s a “name drop” citation. Even if it did come from NASA, it also probably doesn’t really support the claim being made (a “big reach”), and/or it’s not even relevant at all (a “curve ball”). Many people are so dazzled by the idea of NASA as a source that they will cite its research if it’s even remotely connected to what they care about.

NASA does fund a lot of research, but they do not magically produce better research than other organizations. Emphasizing that they funded a study usually means someone isn’t focusing on what matters: results, not sources.

Mechanism masturbation

“Mechanism masturbation” is wishful and fanciful thinking about why/how treatments might work. Science journalist Jonathan Jarry (once upon a time on a place called “Twitter,” now removed, but you can listen to an interview about it):

“There is a fascinating phenomenon in the complementary and alternative medicine literature we could call ‘mechanism masturbation’ where the authors, faced with the tiniest of positive signals in a small study, write paragraph after paragraph hypothesizing how, mechanistically, watermelon seeds might cure schizophrenia.”

Musculoskeletal and pain research, alternative as well as more mainstream research, is rotten with “mechanism masturbation” — often because it’s just all there is. There’s no good clinical trial data, so we get wishful thinking and wild speculation instead, even in scientific publications. The field is surprisingly afflicted with cart-before-horse speculation about how treatments could work, might work, should work, maybe work… when the clinical trials (if they exist at all) tend to show that they don’t actually work, or not very well.

Jonathan’s satirical example really nails the flavour of “research” like this:

“It might interfere with the hypothalamic-pituitary-adrenal axis… One of its compounds does bind to alpha receptors in this cell type… Could play a role in this cascade... Anyway, preliminary results from n = 6. More studies needed!”

Yes, that definitely reads like about a thousand papers I’ve wasted my time reading over the last decade. I’m always looking for the rare scraps of basic science I can actually consider interesting/promising instead of more post-hoc rationalization for someone’s meal ticket. 🙄

Confession: I have used bogus citations myself occasionally, by accident

I work my butt off to prevent bogus citations on this website, but I’m not perfect, it’s a hard job, and I’ve been learning the whole time I’ve been doing it. Sometimes I read a scientific study that I wrote about long ago and I think, “Was I high?” It’s usually clear in retrospect that it was a straightforward case of wishful interpretation — reporting only the most useful, pleasing, headline-making interpretation of the study — but once in a while I find one so wrong that my rationale for ever using it is just a head-scratcher.

This is not a common problem on PainScience.com, but it’s wise to acknowledge that I also crap out the occasional bogus citation, and I’ve probably unfairly demoted some studies I didn’t like. No one is immune to bias. The only real defense is a relentless and transparent effort to combat citation bogusity.

Dozens of studies can still be wrong

“Everyone” knows that you can’t trust just one study … but even dozens of studies can still add up to diddly and squat. Scott Alexander back in 2014:

I worry that most smart people have not learned that a list of dozens of studies, several meta-analyses, hundreds of experts, and expert surveys showing almost all academics support your thesis … can still be bullshit. Which is too bad, because that’s exactly what people who want to bamboozle an educated audience are going to use.

I worry about that too. The whole piece is highly recommended dorky reading: “Beware the Man of One Study.”

Today there is mind-bogglingly more junk science available to cite than ever before, because of the “predatory journal” crisis

Pseudoscience and crappy journals have always existed. But it’s worse now. Much worse.

There are now at least several thousand (bare minimum) completely fraudulent scientific journals that exist only to take money from gullible and/or desperate academics who must “publish or perish.” It’s a scam that has exploded into a full-blown industry on an almost unthinkable scale since the 1990s. These so-called journals collectively publish millions of papers annually with “no or trivial peer review, no obvious quality control, and no editorial board oversight.”11 Oy.

Tragically, most of the papers they publish have every superficial appearance of being real scientific papers. The substantive difference is that real scientific papers are peer-reviewed, and these papers are not! But the authors are actual scientists, who work at real organizations, who actually care about their research and their careers — if they didn’t, they wouldn’t pay predatory journals to publish their papers! Some of their research might even be half decent, and might have even made it into a real journal. But only some. Much of it is blatantly incompetent, fraudulent, or just daft, and wouldn’t have had a snowball’s chance in hell of being accepted by a real journal. Even most of the better ones wouldn’t cut it at a real journal, or at least not without significant improvements — because that’s what peer review is all about, separating the wheat from the chaff.

But it’s easy to get published in one of these predatory journals, no matter how bad your paper is. They will publish literal gibberish — this has been demonstrated repeatedly — as long as you pay them. But the real problem is that they publish so many papers that look like real science to the casual observer.

All of this not-peer-reviewed research now constitutes a substantial percentage of the papers I have to wade through when I’m doing my job, trying to get to the truth about pain science. When I complain that there’s a lot of junky science out there, I don’t just mean that there’s some low quality science — which was true long before predatory journals existed — I mean that the scientific literature is severely polluted with actual non-science, with an insane number of papers that were published under entirely false pretenses, the fruit of fraud.

This isn’t just a “problem” for science—it’s a rapidly developing disaster that “wastes taxpayer money, chips away at scientific credibility, and muddies important research.”

Examples of bogus citations in the wild

A clean miss of a clean miss!

Here’s a rich example of a “clean miss” double-whammy, from a generally good article about controversy over the safety and effectiveness of powerful narcotic drugs. The authors are generally attacking the credibility of the American Pain Foundation’s position, and in this passage they accuse the APF of supporting a point with an irrelevant reference — a “clean miss.” But the accusation is based on a citation that isn’t actually there — a clean miss of their own! (Not that I could find in a good 20 minutes of poring over the document. Maybe it’s there, but after 20 minutes of looking I was beginning to question the sanity of the time investment.)

Another guide, written for journalists and supported by Alpharma Pharmaceuticals, likewise is reassuring. It notes in at least five places that the risk of opioid addiction is low, and it references a 1996 article in Scientific American, saying fewer than 1 percent of children treated with opioids become addicted.

But the cited article does not include this statistic or deal with addiction in children.

Actually, after a careful search, I can find no such Scientific American article cited in the APF document at all. So the APF’s point does not appear to be properly supported, but then again neither is the accusation.

SleepTracks.com tries to sell a dubious product with bogus references to science

I was making some corrections to my insomnia tutorial when I curiously clicked on an advertisement for SleepTracks.com, where a personable blogger named “Yan” is hawking an insomnia cure: “brain entrainment” by listening to “isochronic tones,” allegedly superior to the more common “binaural beats” method.

Yan goes to considerable lengths to portray his product as scientifically valid, advanced and modern, and he actually had me going for a while. He tells readers that he’s done “a lot of research.” To my amazement, he even cited some scientific papers. I had been so lulled by his pleasant writing tone that I almost didn’t check ‘em.

“Here are a few sleep-related scientific papers you can reference,” Yan writes, and then he supplies these three references:

- EEG correlates of sleep: Evidence for separate forebrain substrates. Brain Research, 6, 143-163. Sterman, M.B., Howe, R.C., and MacDonald, L.R.

- Treating psychophysiologic insomnia with biofeedback. Arch Gen Psychiatry. ;38(7):752-8. Hauri, P.

- The treatment of psychophysiologic insomnia with biofeedback: a replication study. Biofeedback Self Regul. (2):223-35. Hauri PJ, Percy L, Hellekson C, Hartmann E, Russ D

Notice anything odd there? They lack dates. Hmm. I wonder why? Could it be because they’re from the stone age?

Those papers are from 1967, 1981, and 1982. In case you’ve lost track of time, 1982 was 44 years ago — not exactly “recent” research, particularly when you consider how far neuroscience has come in the last twenty years. Now, old research isn’t necessarily useless, but Yan was bragging about how his insomnia treatment method is based on modern science. And the only three references he can come up with pre-date the Internet by a decade? One of them pre-dates me.

Clearly, these are references intended to make him look good. Yan didn’t actually think anyone would look them up. Yan was wrong. I looked them up. And their age isn’t the worst of it.

The 1981 study had negative results. (The Backfire!) The biofeedback methods studied — which aren’t even the same thing Yan is selling, just conceptually related — didn’t actually work: “No feedback group showed improved sleep significantly.” Gosh, Yan, thanks for doing that research! I sure am glad to know that your product is based on decades-old research that showed that a loosely related treatment method completely flopped!

The 1982 study? This one actually had positive results, but again studying something only sorta related. And the sample size? Sixteen patients — a microscopically small study, good for proving nothing.

The 1967 study? Not even a test of a therapy: just basic research. Fine if you’re interested in what researchers thought about brain waves and insomnia before ABBA. Fine if you want to include numerous other references. But as one of three references intended to support the efficacy of your product? Is this a joke?

So Yan gets the Bogus Citation Prize of the Year: not only are his citations barely relevant and ancient, but they are obviously deliberately cited without dates to keep them from looking as silly as they are.
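The missing-dates trick is easy to screen for mechanically. Here is a minimal sketch in Python, assuming nothing more than a crude rule that a dated reference contains a four-digit year somewhere; the names `YEAR_PATTERN` and `lacks_year` are made up for illustration, and the three strings are Yan's references exactly as he gave them. It can be fooled (a page range like 1901-1910 would pass as a "year"), so treat it as a first-pass filter and not a verdict.

```python
import re

# Hypothetical heuristic: a standalone four-digit number from 1800 to 2099
# is treated as a publication year. Page ranges in that span would fool it.
YEAR_PATTERN = re.compile(r"(?<!\d)(?:18|19|20)\d{2}(?!\d)")


def lacks_year(reference: str) -> bool:
    """Return True if the reference text contains nothing that looks like a year."""
    return YEAR_PATTERN.search(reference) is None


# The three references as quoted from SleepTracks.com.
references = [
    "EEG correlates of sleep: Evidence for separate forebrain substrates. "
    "Brain Research, 6, 143-163. Sterman, M.B., Howe, R.C., and MacDonald, L.R.",
    "Treating psychophysiologic insomnia with biofeedback. "
    "Arch Gen Psychiatry. ;38(7):752-8. Hauri, P.",
    "The treatment of psychophysiologic insomnia with biofeedback: a replication study. "
    "Biofeedback Self Regul. (2):223-35. "
    "Hauri PJ, Percy L, Hellekson C, Hartmann E, Russ D",
]

# Collect every reference that gives the reader no date to judge it by.
undated = [ref for ref in references if lacks_year(ref)]

for ref in undated:
    print("No year found:", ref[:60])
```

A check like this only flags a suspicious pattern. Finding out that the papers are old, negative, or barely related still takes a human who actually looks them up.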

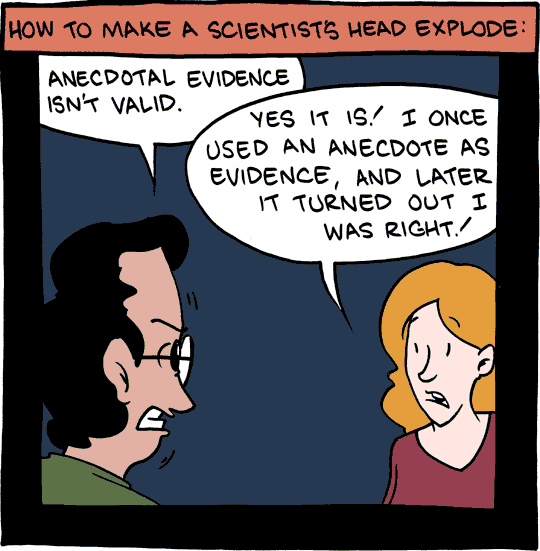

“The fake”

Anecdotal evidence isn’t real evidence … but it can kind of seem like real evidence if it’s in a footnote! Illustration used with the kind permission of Zach Weiner, of Saturday Morning Breakfast Cereal. Thanks, Zach!

Who cites so badly? Dramatis personae — motives and mechanisms for bogus citations

Most bogus citing is done by the people who have a vested interest in draping scientific credibility over their product/service like a satin wrestling robe. This group is by far the biggest and also the simplest. At one extreme, there’s the most naked profiteering: corporate marketing departments generating bullet points for product pages. Only slightly less obviously, you have legions of freelance therapists hotly justifying their practices in social media fights, or flogging their services on their clinic blog and trying to put a coat of science paint on it.

There are stranger players, though…

The “scientists” — not actually, not relevant, or just bad

Bogus citations are often accompanied by strange claims of science expertise and credentials. This happens so often, especially on social media, that I have started to classify them. I think there is a spectrum you can simplify into three major types: the Bullshitter, the Lightweight, and the Bad Scientist.

The Bullshitter is just lying. They invent credentials and toss them around in the hopes that it will make them more credible, but they are so clueless that they don’t realize how transparent the lie is. For instance, someone who claims to have a degree in “science” obviously knows nothing about science, or they would have been more specific. It’s like a cat fluffing up to fool a human into thinking it’s bigger than it is — we know what you’re doing, kitty.

The Lightweight is technically telling the truth when they claim to be a scientist, but they are not the right kind, or they haven’t worked in the field for many years, or they’ve only been a scientist for about twenty minutes, or some other major caveat.

The Bad Scientist is a real scientist, someone working as a scientist in a relevant field. But even “real scientists” can be terrible at their jobs, and many are. Scientific competence goes way beyond just squeaking through a degree program. Real scientists can be sloppy and dishonest and even just not that bright; they can fall into pseudoscientific rabbit holes and disappear from legitimate science forever; and they can be remarkably ignorant of the history and process of science. Bottom line: most scientists aren’t a Carl Sagan or even a Bill Nye. Many aren’t even up to the level of the MythBusters. In short, many scientists are much more like technicians than the curiosity-driven polymaths and super dorks that have come to represent “scientist.”

The incompetents, dilettantes, and wannabes

Almost all bogus citations seem to be connected to selling something, and there’s no doubt a great many of them are perpetrated as cynically and consciously as hyperbolic marketing bullet points (hell, they routinely overlap). Lots of people do cite in bad faith, just like an unscrupulous undergrad stuffing barely-relevant citations into a term paper and hoping the prof won’t notice.

So are most bogus citations fraudulent and deceptive? Or just incompetent and delusional?12 It’s mostly a false dichotomy: there’s a surprising amount of overlap! Arguably, all deceptive citing is incompetent by definition — if you’re being deceptive, you’re doing it wrong.

But most important, I’m wary of attributing to malice that which can also be easily explained by foolishness (Hanlon’s razor).

The Internet is an extremely mixed blessing; the Information Age is also the Age of Terrible Signal-to-Noise Ratio. All forms of publishing have been democratized, resulting in infinite torrents of low-quality content, the scale of which would have been inconceivable to us in the 80s. A million virtual tons per day of amateur writing, photography, artwork, video … and “scholarship.”

A lot of people are so ignorant of science and scholarship that the existence of a paper on PubMed actually constitutes “evidence” in their minds, literally regardless of what the abstract actually says — never mind the full text! If it’s on PubMed, it “must be science,” and they truly think that’s good enough. Their standards are just absurdly low. Their bias is “surely science has my back” and the presence of a superficially relevant paper on PubMed is all it takes to confirm it.

This is how I know a lot of bogus citing is not only non-deceptive, but actually earnest: you can see the pride!

Many bogus citation perpetrators actually feel smug because they looked something up on PubMed and linked to it. So much effort! While we’re rolling our eyes at their childish imitation of scholarship, they are looking in the mirror, fist-pumping, and telling themselves, “You beautiful badass — you made a science footnote!”

The Internet is clogged with people as academically sophisticated as Jesse Pinkman.

Better citations and footnotes

Here in the Salamander’s domain, there are hopefully not many bogus citations … and certainly none I know about.

Citations here are harvested and hand-crafted masterfully from only the finest pure organic heirloom artisan fair-trade sources. If I had a TV ad for this, there’d be oak barrels and a kindly old Italian gentleman wearing a leather apron and holding up sparkling citations in the dappled sunshine before uploading them to ye olde FTP server.

But how are you to believe this?

To actually trust citations without checking them yourself, you have to really trust the author.

This is why I have gone to considerable technological lengths on PainScience.com not just to cite my sources for every key point, but also to provide user-friendly links to the original material… which makes them easy to check! This is a basic principle of responsible publishing of health care information online.

If you’re not going to leverage technology to facilitate reference-checking, why even bother?


About Paul Ingraham

I am a science writer in Vancouver, Canada. I was a Registered Massage Therapist for a decade and the assistant editor of ScienceBasedMedicine.org for several years. I’ve had many injuries as a runner and ultimate player, and I’ve been a chronic pain patient myself since 2015.

Related Reading

- Therapy Babble — Hyperbolic, messy, pseudoscientific ideas about manual therapy for pain and injury rehab are all too common

- Ioannidis: Making Medical Science Look Bad Since 2005 — A famous and excellent scientific paper … with an alarmingly misleading title

- Statistical Significance Abuse — A lot of research makes scientific evidence seem much more “significant” than it is

- Why “Science”-Based Instead of “Evidence”-Based? — The rationale for making medicine based more on science and not just evidence… which is kinda weird

- Fake journals in the age of fake news: the dangers of predatory publishing - Healthy Debate, by Laurie Proulx, Manoj Lalu, Kelly Cobey, Donna Rubenstein (HealthyDebate.ca)

- Patently False: The Disinformation Over Coronavirus Patents, by Jonathan Jarry (McGill.ca), explores an excellent example of referencing theatre: citing patents that don’t actually support conspiracy theories.

What’s new in this article?

Twelve updates have been logged for this article since publication (2009). All PainScience.com updates are logged to show a long term commitment to quality, accuracy, and currency.

When’s the last time you read a blog post and found a list of many changes made to that page since publication? Like good footnotes, this sets PainScience.com apart from other health websites and blogs. Although footnotes are more useful, the update logs are important. They are “fine print,” but more meaningful than most of the comments that most Internet pages waste pixels on.

I log any change to articles that might be of interest to a keen reader. Complete update logging of all noteworthy improvements to all articles started in 2016. Prior to that, I only logged major updates for the most popular and controversial articles.

See the What’s New? page for all recent site updates.

2024 — I finally added “The Zombie.” Better late than never!

2022 — Added two sections: “Mechanism masturbation” and “Dozens of studies can still be wrong.”

2022 — No change in substance, but a bunch of editing and improvements to formatting and presentation.

2020 — New section, “Malice and fraud versus incompetence and self-delusion.”

2019 — New section, “Who cites so badly? Dramatis personae.” The bullshitter, the lightweight, and the bad scientist.

2018 — New section on cherry-picking and confirmation bias in evaluating studies. This article is now quite a comprehensive review of how citing can go wrong.

2018 — Tiny new section about non-obviously bogus citations: “What if a citation avoids all of these pitfalls? It can still be bogus!”

2018 — Added two xkcd comics: “Dubious Study” and “New Study.” This article is now chock-a-block with comics! Probably more comics per square inch than any other page on PainScience.com.

2018 — Big update today: added thorough discussion of the problem of predatory journals. Added three new bogus citation types: the non-cite, the junk cite, and the dump cite. Added a short section about citations to NASA studies.

2017 — Added a brief acknowledgement of my own fallibility in the citation department.

2017 — Added two citations substantiating the prevalence of predatory/fraudulent journals.

2017 — Added a paragraph about the existence and purpose of the junk journal industry.

2009 — Publication.

Notes

- This footnote, for instance, in no way supports the statement it follows, and is present only for the purpose of being mildly amusing.

- It wasn’t a matter of interpretation or opinion. It was just way off: he had read much more into the paper than the researchers had ever intended anyone to get from it. The authors had clearly defined the limits of what could be interpreted from their evidence, and he had clearly exceeded those limits. It was a strong example of a big reach citation — #8 in my list below.

- Beall J. What I learned from predatory publishers. Biochem Med (Zagreb). 2017 Jun;27(2):273–278. PubMed 28694718 ❐ PainSci Bibliography 52870 ❐

ABSTRACT

This article is a first-hand account of the author's work identifying and listing predatory publishers from 2012 to 2017. Predatory publishers use the gold (author pays) open access model and aim to generate as much revenue as possible, often foregoing a proper peer review. The paper details how predatory publishers came to exist and shows how they were largely enabled and condoned by the open-access social movement, the scholarly publishing industry, and academic librarians. The author describes tactics predatory publishers used to attempt to be removed from his lists, details the damage predatory journals cause to science, and comments on the future of scholarly publishing.

- Gasparyan AY, Yessirkepov M, Diyanova SN, Kitas GD. Publishing Ethics and Predatory Practices: A Dilemma for All Stakeholders of Science Communication. J Korean Med Sci. 2015 Aug;30(8):1010–6. PubMed 26240476 ❐ PainSci Bibliography 52903 ❐ “Over the past few years, numerous illegitimate or predatory journals have emerged in most fields of science.”

- McGill.ca Office for Science & Society [Internet]. Jarry J. Deceitful AI Videos Mislead Seniors on Important Health Issues; 2025 Dec 11 [cited 26 Jan 8]. PainSci Bibliography 49404 ❐

Jonathan Jarry reviewed several AI-generated health videos with millions of views for the McGill Office for Science & Society. The AI slop that he waded through was only a fraction of what is now spewing out of Asian content farms.

And the target audience? Senior citizens! People who barely know that AI slop exists, let alone how to spot it. Generative AI keeps shredding our assumptions about how much can be faked, and how fast, and this stuff seems more real to the naive just by virtue of its sheer quantity: surely so much content must be real!

It also all “seems legit” thanks particularly to copious fake citations:

“I picked four such channels and checked every scientific reference their most popular videos listed to see if they existed. Out of 65 references, five were real. I was unable to find the 60 others. As with the Copenhagen non-study, the journals, volumes, and issues were usually dead-on: the AI is simply inserting fake papers into real pages. Occasionally, a journal was made up.”

The result would be laughable if it weren’t so disturbingly effective.

- My self-references aren’t circular: when I cite other articles, I’m always citing something else I’ve written that provides much more detail and many more citations. I’m essentially saying “I keep all of my citations on this topic in another place.” That is not the same thing as “the only evidence for this point is a longer version of this opinion on another page”!

- Ingraham. The legend of Boot Nail Guy reconsidered. PainScience.com. 1400 words.

- Ingraham. Ioannidis: Making Medical Science Look Bad Since 2005: A famous and excellent scientific paper … with an alarmingly misleading title. PainScience.com. 3272 words.

- Cuijpers P, Cristea IA. How to prove that your therapy is effective, even when it is not: a guideline. Epidemiol Psychiatr Sci. 2016 Oct;25(5):428–435. PubMed 26411384 ❐

Most of these were someone’s attempt to “prove” that their methods work, clinicians playing at research, using all kinds of tactics (mostly unconsciously) to get the results they wanted, such as (paraphrasing Cuijpers et al.):

- Sell it! Inflate expectations! Make sure everyone knows it’s the best therapy EVAR.

- But don’t compare to existing therapies!

- And reduce expectations of the trial: keep it small and call it a “pilot.”

- Use a waiting list control group.

- Analyse only subjects who finish, ignore the dropouts.

- Measure “success” in a variety of ways, but report only the good news.

And so on. And many of these tactics leave no trace, or none that’s easy to find.
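To make one of those tactics concrete, here is a minimal sketch of “measure success in a variety of ways, but report only the good news.” It is my own illustration, not anything from Cuijpers and Cristea: it simulates trials of a therapy that does nothing at all, measures several outcomes, and counts how often at least one of them looks “significant.” The function names, the group size of 30, the known standard deviation of 1, and the independence of the outcomes are all simplifying assumptions.

```python
import random
from statistics import NormalDist, mean


def p_value_no_effect_outcome(rng, n_per_group=30):
    """Two-sided z-test p-value for one outcome when the therapy does nothing."""
    # Both groups come from the same distribution (mean 0, SD 1): no real effect.
    treated = [rng.gauss(0, 1) for _ in range(n_per_group)]
    control = [rng.gauss(0, 1) for _ in range(n_per_group)]
    # Assumption: the SD is known to be 1, which keeps this a simple z-test.
    standard_error = (2 / n_per_group) ** 0.5
    z = (mean(treated) - mean(control)) / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rate(n_outcomes, n_trials=2000, alpha=0.05, seed=1):
    """Fraction of useless-therapy trials with at least one 'significant' outcome."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_trials):
        # Assumption: each outcome is simulated independently of the others.
        p_values = [p_value_no_effect_outcome(rng) for _ in range(n_outcomes)]
        if min(p_values) < alpha:  # report only the good news
            hits += 1
    return hits / n_trials


if __name__ == "__main__":
    for k in (1, 5, 10):
        print(k, "outcome(s):", round(false_positive_rate(k), 3))
```

For independent outcomes, the chance of at least one false positive is 1 − 0.95^k in theory: about 5% with one outcome, about 23% with five, and about 40% with ten. The simulated rates should land close to those figures. Real outcome measures are usually correlated, so the inflation in an actual trial would typically be smaller than this sketch suggests, but it does not go away.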

- Blogs.jwatch.org [Internet]. Sax P. Predatory Journals Are Such a Big Problem It's Not Even Funny; 2018 June 14 [cited 18 Nov 28]. PainSci Bibliography 53235 ❐

- This is just like the abiding skeptical question: Does the spiritual medium know she’s a fraud, or does she actually think she can talk to the dead? Is the faith healer a con artist or a true believer?