Confirmation Bias

Confirmation bias is the human habit of twisting our perceptions and thoughts to confirm what we want to believe.

Confirmation bias explains a lot about human nature. Most people know it best in the form of “selective perception” or “selective memory” — hearing, seeing, and remembering only what you want to hear, see, and remember. It even works with the other senses, like touch: see palpatory pareidolia. Every cat owner knows how good felines are at hearing only what pleases and amuses them to hear.

But nobody does confirmation bias like the human animal: we employ a dazzling array of devious (and largely unconscious) mental tactics and thinking glitches that lead us all to confirm only the beliefs we cherish, our pet theories (example of a pet theory: “My dog is the best dog!”). We not only tend to ignore, deny, and overlook anything that contradicts our point of view, but we also inflate the importance of anything that supports it. And the smarter we are, the better we are at this.1

Confirmation bias is a major reason for the proliferation and persistence of bad ideas in all fields, but especially snake oil and quackery in medicine generally, and even more so in pain medicine, where so few treatments are effective. Most popular treatments for pain are based more on wishful thinking than scientific plausibility and evidence, because we very strongly tend to see only the data that confirms what we want to believe. Amateurs and experts alike are prone to significant thinking errors. There are people who consider it part of their job description to try to eliminate confirmation bias from their thinking (the best scientists and journalists, for instance), but it’s really difficult. Everyone has confirmation bias: it’s just how minds (don’t) work!

The first principle is that you must not fool yourself & you are the easiest person to fool.

Richard Feynman

Closely related thinking errors

We have many tools in our confirmation bias toolkit. The main mechanism of confirmation bias is selective thinking: emphasizing and remembering anything that confirms your bias, and ignoring and forgetting everything else. This is a fairly passive process.

But we can also take a more active role, cooking up theories on the spot to explain away anything that contradicts our beliefs: ad hoc hypothesizing. When our beliefs are challenged more blatantly, sometimes we just double down and believe more intensely: the backfire effect. We do this because it is generally (much) easier to relieve the discomfort of cognitive dissonance by rejecting inconvenient facts than to change beliefs that are extremely important to our personality. Plus, it’s easy to turn to a community of believers for reinforcement and reassurance: our friends cheerfully help us confirm our biases. That’s communal reinforcement.

Motivated reasoning is the process of actively dialing our confirmation bias up to 11 — not just failing to see problems with our own beliefs, but actively looking for ways to prop them up. Ironically, intelligent people often do this extremely effectively, which is why smart people sometimes seem so bleedin’ stupid — because they are dedicating their intelligence to the challenge of motivated reasoning, using their brains for the wrong thing.

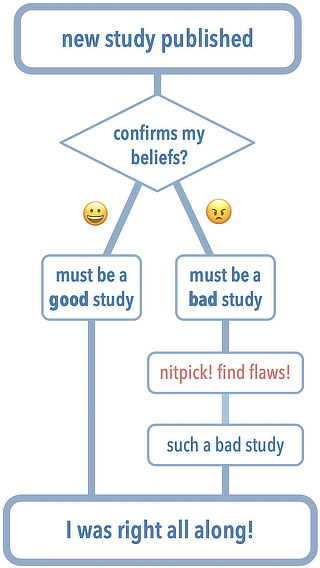

A simple example of confirmation bias: cherry picking and nitpicking of studies

When we see a citation to a scientific paper supporting something we believe, most of us look no further: it’s enough that it confirms our bias. But citations routinely do not actually support what they seem to support: there are many kinds of bogus citations (see 13 Kinds of Bogus Citations). People who cite science routinely cherry pick the evidence that supports their view, and nitpick (or simply ignore) the studies that challenge it. And since the reader is also unlikely to look more carefully at anything that confirms their bias, “everyone wins”! The citation is both published and consumed uncritically.

Skeptics are just as prone to this as “true believers” in classic quackeries. Most acupuncturists will cherry pick studies that support acupuncture and nitpick ones that undermine it… and most skeptics do exactly the opposite. Everyone tends to nitpick studies that seem to contradict their beliefs, whatever they may be… and celebrate the ones that seem to back them up.

A dramatic example: chiropractic bias confirmed in corpses

Old-school chiropractors still believe that problems with spines cause visceral disease. Although much of the profession has moved on, a surprisingly large faction (the “straights”) still clings to the old ways — pre-scientific nonsense, debunked every which way. Organ health does not depend on the state of spinal joints,2 and this is a cold, hard, medical fact, confirmed daily by everyone with serious spinal lesions, whose organs work just fine thank you very much.

And yet chiropractors routinely find “evidence” that “confirms” their bias. Or, in this case, even physicians who have bought into it (which used to be much more common). And they’ve been doing it for a long time: this bizarre 1921 paper3 was cited in 2014 by a chiropractor.4

Dr. Henry Winsor of Haverford, PA, looked into 50 corpses, comparing their spinal curvatures to signs of related organ disease. By his own admission, he was basically eyeballing it and, shocker, “showed that 138 of the 139 diseases of the internal organs that were present were in connection to the misalignments of the vertebrae.” He was basically hallucinating in harmony with his theory. Dr. Mark Crislip (who wrote about this in more detail for ScienceBasedMedicine.org):5

Without knowing how abnormal curvature is defined and how the spines were examined, as far as I can tell this is a massive example of confirmation bias. He saw what he wanted to see. … This is as curious an example of presuming causation from association as I have ever seen.

This ancient study would make a quaint, excellent example of confirmation bias in itself — but it’s deliciously bizarre (and sadly predictable) that a modern chiropractor would seriously cite the paper, re-confirming the same bias decades later.

About Paul Ingraham

I am a science writer in Vancouver, Canada. I was a Registered Massage Therapist for a decade and the assistant editor of ScienceBasedMedicine.org for several years. I’ve had many injuries as a runner and ultimate player, and I’ve been a chronic pain patient myself since 2015.

What’s new in this article?

Jun 8, 2023 — Added a small new section about confirmation bias as a driver of both cherry-picking and nitpicking of studies.

2012 — Publication.

Notes

- West RF, Meserve RJ, Stanovich KE. Cognitive sophistication does not attenuate the bias blind spot. J Pers Soc Psychol. 2012 Sep;103(3):506–19. PubMed 22663351

This paper is the scientific version of the classic Richard Feynman quote: “The first principle is that you must not fool yourself & you are the easiest person to fool.” It presents evidence that intelligence makes you better at fooling yourself: “none of these bias blind spots were attenuated by measures of cognitive sophistication such as cognitive ability or thinking dispositions related to bias. If anything, a larger bias blind spot was associated with higher cognitive ability.”

- Are the little bundles of nerves that exit your spine the wellspring of all visceral vitality? Will your organs wilt like neglected house plants if those nerve roots are slightly impinged? No: cut a nerve root completely, and you’ll certainly paralyze something, but not an organ, because organs simply don’t depend on spinal nerve roots. And yet this is what many chiropractors believe, and would like their customers to believe, after a century of contradictory evidence. See Organ Health Does Not Depend on Spinal Nerves! One of the key selling points for chiropractic care is the anatomically impossible premise that your spinal nerve roots are important to your general health.

- Winsor H. Sympathetic Segmental Disturbances-II: The Evidence of the Association, In Dissected Cadavers, of Visceral Disease with Vertebral Deformities of the Spine of the Same Sympathetic Segments. The Medical Times. 1921. PainSci Bibliography 53775

- Chiropractic care can treat more than just bad backs. Dakota Country Star. Aug 13, 2014.

- ScienceBasedMedicine.org [Internet]. Crislip M. That’s so Chiropractic; 2014 Aug 23 [cited 14 Sep 16]. PainSci Bibliography 53772

A terrific read covering a lot of chiropractic ground: fantastical autopsy results, frightening autistic children, chiropractic vaccinations, and stroke and patient safety.